You've probably heard of voice assistants and pictured something like Siri reading out your calendar.

A bit robotic. Slightly wrong. Mostly annoying.

That's not what we're talking about here.

GPT-Realtime-2 is a different kind of technology. The first Realtime model was impressive because it could hold a reasonably meaningful conversation. Realtime-2 brings multitasking to the table as well.

What is GPT-Realtime-2 and how does it work?

Standard text-to-speech (TTS) models take text and read it out loud with almost zero latency. That's the whole job. You write something, it speaks it. Static input, spoken output. It doesn't understand anything. It doesn't respond to anything. It's a mouth with no brain.

GPT-Realtime-2 is a reasoning model built for live voice.

It listens to you speaking. It understands what you mean: the words and the request behind them.

But the biggest difference from the first Realtime model is tool calling.

It can call tools mid-conversation. This means checking your calendar, updating a database, pulling a flight status, updating a CRM, drafting an email ...

It handles interruptions and corrections without losing track of where the conversation was going. And it responds in spoken audio, not as a piece of text handed off to a separate TTS engine.

The whole loop (listen, reason, act by calling tools, respond) happens in real time.
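To make the "act" step concrete, here is a minimal sketch of the part you would write yourself: the code that receives a tool call from the model, runs it, and hands the result back. Everything in it is an assumption for illustration. The tool names, their return values, and the idea that arguments arrive as a JSON string are mine, not taken from OpenAI's documentation.

```python
import json
from typing import Callable, Dict


# Hypothetical tools; real ones would call your calendar, CRM, and so on.
def check_calendar(date: str) -> dict:
    return {"date": date, "events": ["Team sync 10:00"]}


def flight_status(flight: str) -> dict:
    return {"flight": flight, "status": "on time"}


# Registry mapping the tool name the model asks for to the function to run.
TOOLS: Dict[str, Callable[..., dict]] = {
    "check_calendar": check_calendar,
    "flight_status": flight_status,
}


def run_tool_call(name: str, arguments_json: str) -> str:
    """Run one requested tool and return its result as a JSON string.

    Errors are returned as data instead of raised, so the model can
    tell the user something went wrong and keep the conversation going.
    """
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(arguments_json)
        return json.dumps(TOOLS[name](**args))
    except (json.JSONDecodeError, TypeError) as exc:
        return json.dumps({"error": str(exc)})


print(run_tool_call("flight_status", '{"flight": "LH123"}'))
```

Returning errors as JSON rather than crashing matters more in voice than in chat: a dead call is a worse experience than "sorry, I couldn't reach your calendar."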

Some specifics worth knowing:

- 128K context window is four times what the previous version had. Longer, more complex sessions without the model forgetting where it started.
- Configurable reasoning effort. You can dial this from minimal to xhigh depending on whether you need a fast reply or a deeply thought-through one. Low is the default to keep latency down.
- Preambles. While the model is working on something, it can say things like "let me check that", so the user knows it's thinking, not frozen.
- Parallel tool calls. It can hit multiple tools at once and tell you what it's doing: "checking your calendar and looking that up now."
- Tone control. It can speak calmly when there's a problem, warmly when someone is frustrated, upbeat when confirming a win. That's not TTS. That's judgment.
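Preambles and parallel tool calls are easier to picture from the application side. The sketch below is plain Python with `asyncio`, not the Realtime API: the two tool functions, their return strings, and the `say` callback are all hypothetical stand-ins. It only shows the pattern of speaking first and then running both lookups at the same time.

```python
import asyncio


# Hypothetical tools; the sleep stands in for a network round trip.
async def check_calendar(day: str) -> str:
    await asyncio.sleep(0.05)
    return f"{day}: free after 14:00"


async def look_up_flight(flight: str) -> str:
    await asyncio.sleep(0.05)
    return f"{flight}: on time"


async def handle_turn(say) -> list:
    # Preamble first, so the user hears something while the tools run.
    say("Checking your calendar and looking that up now.")
    # Both calls run concurrently, so the wait is roughly the slower
    # of the two rather than the sum. Results come back in call order.
    results = await asyncio.gather(
        check_calendar("Saturday"),
        look_up_flight("LH123"),
    )
    return list(results)


spoken = []
print(asyncio.run(handle_turn(spoken.append)))
```

With two 50 ms lookups the turn takes about 50 ms instead of 100 ms. In a voice conversation, where every pause is audible, that difference adds up quickly.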

Benchmark numbers: GPT-Realtime-2 scores 15.2% higher on Big Bench Audio for audio intelligence compared to the previous model, and 13.8% higher on Audio MultiChallenge for instruction following.

OpenAI also launched two companions alongside it — GPT-Realtime-Translate (live translation across 70+ input languages into 13 output languages) and GPT-Realtime-Whisper (streaming transcription that works as you speak, not after you've finished). They're designed to work together.

How businesses are already using Realtime

Zillow is building an assistant that lets you describe what you want in a home out loud ("find me homes within my BuyAbility, avoid busy streets, schedule a tour for Saturday"), and the model listens, reasons, uses tools, and acts on it.

That's voice-to-action. You're not filling out filters but having a conversation that completes a task. Like with a person.

Deutsche Telekom is building customer support where callers speak in whatever language they're comfortable in, and the model translates the conversation in real time. Not a transcript after the fact. Live. While the conversation is happening.

Priceline is going even further, working toward a voice interface for entire trips. Searching for flights and hotels conversationally, handling changes (like adjusting a hotel booking after a flight delay), getting real-time TSA wait times, then switching into translation mode when you land. One voice thread for the whole journey.

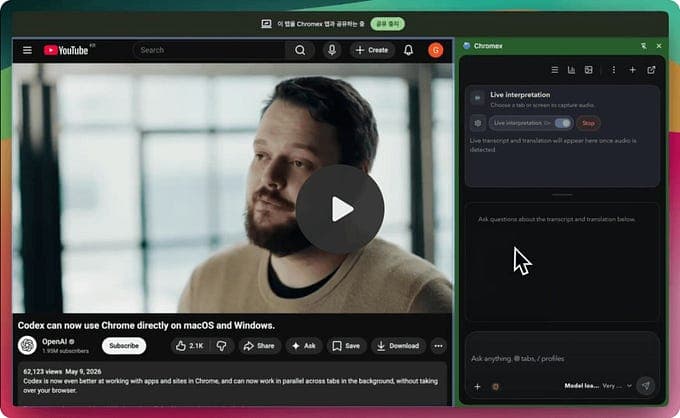

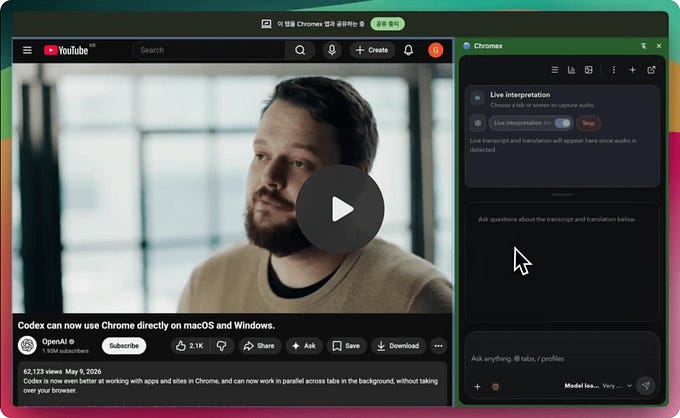

And on the indie/developer side: someone already built a Chrome extension called Chromex that uses GPT-Realtime-2 to translate audio from YouTube videos, live streams, and browser meetings in real time.

You can ask it to summarise the conversation while it's still going, pull out key points, or take notes. One developer, one extension, language barriers largely gone for anything playing in your browser.

That last one is the one I keep thinking about.

Ideas for how you can actually use Realtime-2

Customer support that doesn't suck

If you run any kind of service business, a voice agent built on GPT-Realtime-2 can handle inbound questions, look things up in real time, and respond in the caller's language.

The model handles interruptions, corrections, and tone shifts, which is basically what every support call involves.

Internal meeting assistant

Drop a Realtime-powered bot into your team meetings. It listens, takes structured notes, flags action items as they come up, and can answer quick questions mid-call without anyone having to stop and type into a search bar.

Combined with the transcription model, you get searchable notes automatically.

Accessibility layer for your product

If your app currently requires typing to navigate, a voice layer built on this gives users who can't or don't want to type a full alternative path.

Driving, walking, hands full — voice-to-action covers all of it.

Real-time language support for clients

If you work with international clients and language is a friction point, even a simple setup that translates your spoken briefings or calls live removes a real barrier.

Deutsche Telekom is doing this at scale. You can do a version of it for your next client call.

Content creation on the move

Dictate ideas and have the model structure and refine them as you speak. Not just transcription, but active collaboration while you think out loud.

I haven't built this one yet, but the context window is finally big enough that it would actually hold the thread of a longer piece.

Keep in mind

It's not cheap!

GPT-Realtime-2 costs 30 € per million audio input tokens and 60 € per million audio output tokens. For a prototype or an internal tool, that's fine. For a high-volume consumer product, you need to model this carefully before you ship.
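"Model this carefully" can start as a few lines of arithmetic. The sketch below uses only the two prices quoted above. The token counts per call and the call volume are made-up placeholders: how many tokens a minute of audio produces is something to read off your own usage data, not something I'm asserting here.

```python
PRICE_IN_PER_M = 30.0   # EUR per million audio input tokens (from this article)
PRICE_OUT_PER_M = 60.0  # EUR per million audio output tokens (from this article)


def session_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in EUR of one session, given its audio token counts."""
    total = input_tokens * PRICE_IN_PER_M + output_tokens * PRICE_OUT_PER_M
    return total / 1_000_000


def monthly_cost(calls: int, in_tokens_per_call: int, out_tokens_per_call: int) -> float:
    """Cost in EUR of a month of identical calls."""
    return calls * session_cost(in_tokens_per_call, out_tokens_per_call)


# Placeholder volumes: 10,000 calls a month, 3,000 tokens each way per call.
print(f"per call:  {session_cost(3_000, 3_000):.2f} EUR")
print(f"per month: {monthly_cost(10_000, 3_000, 3_000):.2f} EUR")
```

Swap in measured token counts once you have a prototype running. Output tokens cost twice as much as input tokens, so a chatty agent is more expensive than a chatty caller.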

The knowledge cutoff is September 2024. For anything that requires current information, you need to pair it with tool calls to live data sources, which the model supports, but you have to build that part.

This technology is somewhat ready, but most of the interesting applications still need to be built. The model can keep the conversation going. Building something that's actually worth having a conversation with ... that's a different story :)
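"That part" usually means two things: a description of the tool that the model can read, and a function that fetches the live data. Here is a rough sketch of both. The description follows the JSON Schema style used for function tools, but the exact envelope GPT-Realtime-2 expects is an assumption on my side, so check the current API reference. The tool name, the hard-coded status, and the `fetched_at` field are hypothetical.

```python
import json
from datetime import datetime, timezone

# Tool description the model reads to decide when and how to call the tool.
# Assumption: the field layout below matches what the API accepts.
FLIGHT_STATUS_TOOL = {
    "type": "function",
    "name": "get_flight_status",
    "description": "Look up the live status of a flight. "
                   "Use for any question about current delays.",
    "parameters": {
        "type": "object",
        "properties": {"flight_number": {"type": "string"}},
        "required": ["flight_number"],
    },
}


def get_flight_status(flight_number: str) -> dict:
    """Placeholder: a real version would query a flight-data provider."""
    return {
        "flight_number": flight_number,
        "status": "delayed 40 min",
        # Timestamp so the model can say how fresh the answer is.
        "fetched_at": datetime.now(timezone.utc).isoformat(),
    }


print(json.dumps(get_flight_status("LH123"), indent=2))
```

The timestamp is a small design choice that pays off: a model with a 2024 cutoff cannot know what "now" is unless the tool result tells it.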