So, I watch a lot of YouTube. Probably too much.

But most of it is AI-related: product walkthroughs, interviews, demos from the Anthropic and OpenAI crowd, and random channels shipping something new that week.

The problem was always the same. I’d watch a 45-minute video, find three good nuggets in it, and then a week later I couldn’t remember which video those nuggets came from.

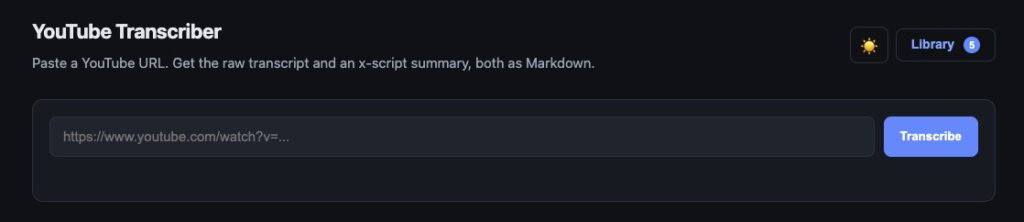

So I built myself a YouTube Transcriber with Claude Code.

Runs locally. Nothing shipped, nothing public, just a tool that lives inside my own research setup.

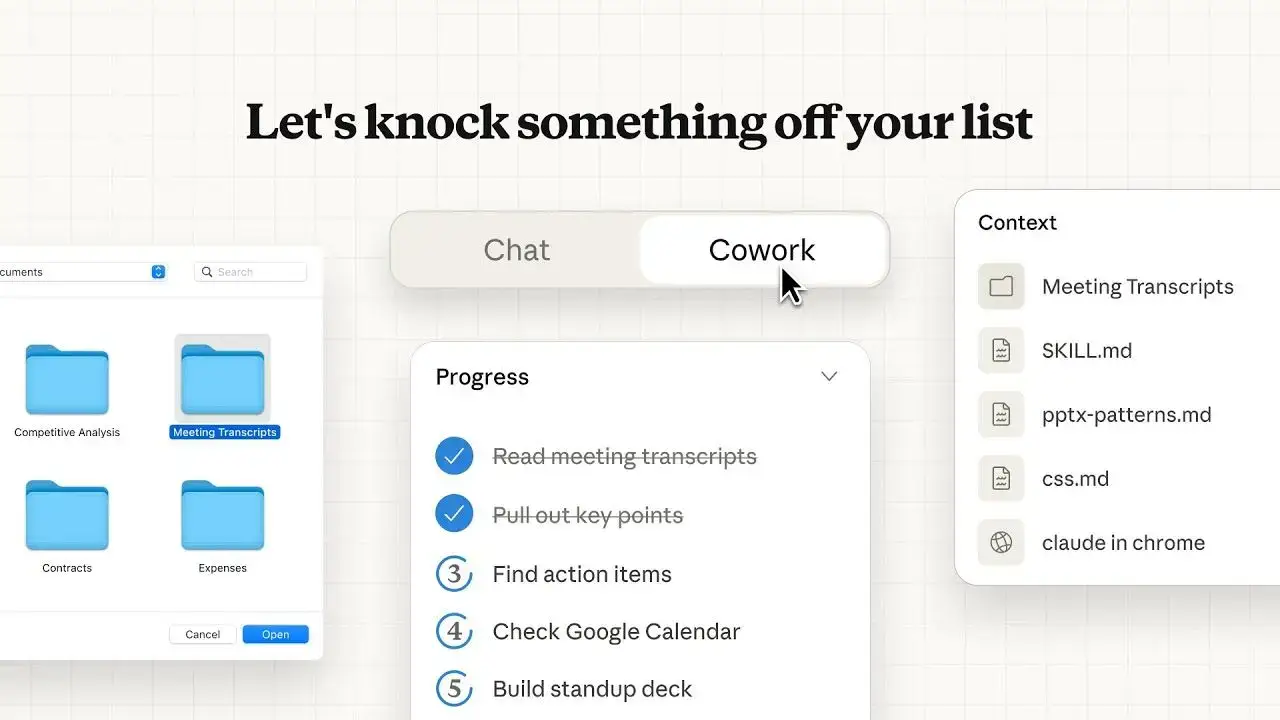

How YouTube Transcriber works:

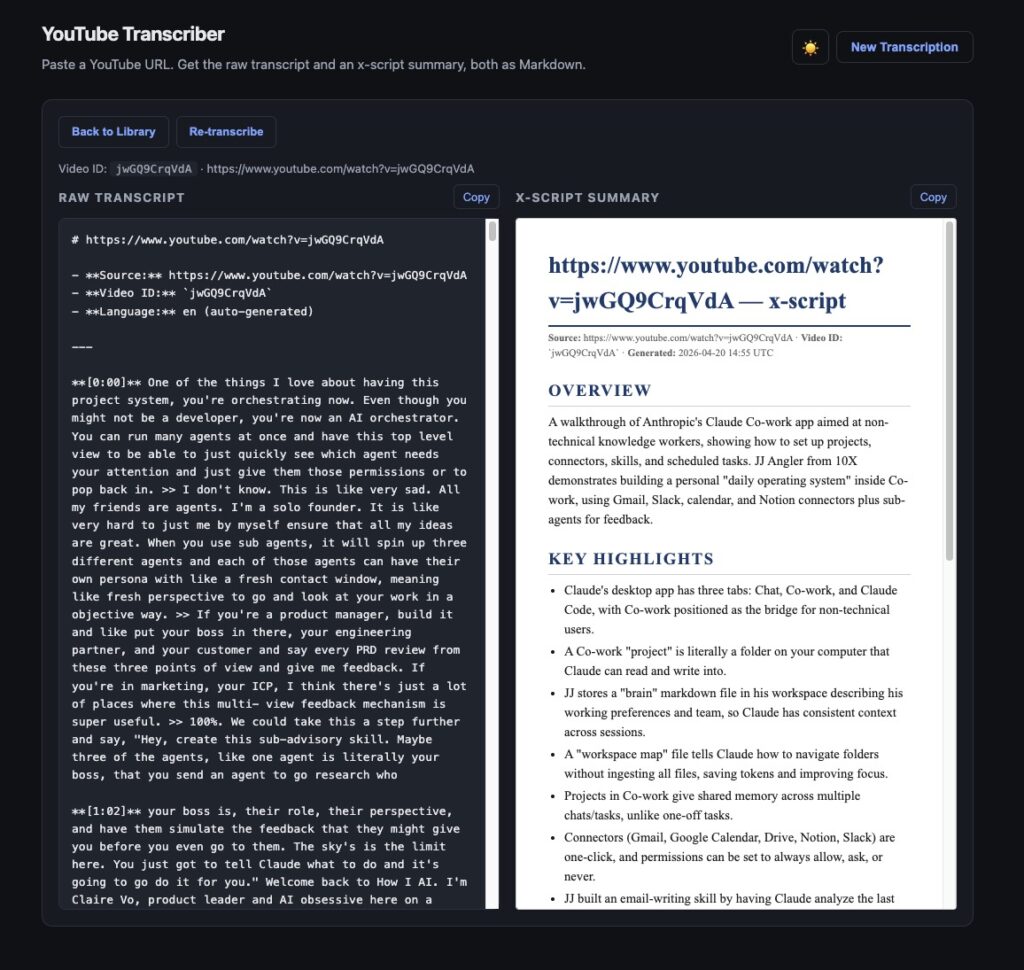

- Paste a YouTube URL

- The app pulls the raw transcript

- Claude, via the API, generates a summary alongside it: an overview, key highlights, and anything worth remembering

- Both get saved as Markdown in a local library I can reuse for blog posts and ideas
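
A minimal Python sketch of that flow might look like the following. It is an illustration under stated assumptions, not the tool's actual code: `fetch_transcript` and `summarize` are hypothetical hooks that would wrap a transcript source and a call to Claude (for example through the Anthropic Python SDK's Messages API), and they are injected here so the flow runs without network access. Writing the summary and transcript into one Markdown file named after the video ID is also an assumption.

```python
import re
from pathlib import Path
from typing import Callable
from urllib.parse import parse_qs, urlparse


def extract_video_id(url: str) -> str:
    """Pull the 11-character video ID out of common YouTube URL shapes."""
    parsed = urlparse(url)
    host = parsed.netloc.lower().removeprefix("www.").removeprefix("m.")
    if host == "youtu.be":
        # Short links: https://youtu.be/<id>
        candidate = parsed.path.lstrip("/").split("/")[0]
    elif host == "youtube.com" or host.endswith(".youtube.com"):
        if parsed.path == "/watch":
            # Standard links: https://www.youtube.com/watch?v=<id>
            candidate = parse_qs(parsed.query).get("v", [""])[0]
        else:
            # Path-style links such as /shorts/<id> or /embed/<id>
            parts = parsed.path.strip("/").split("/")
            candidate = parts[1] if len(parts) >= 2 else ""
    else:
        candidate = ""
    if not re.fullmatch(r"[A-Za-z0-9_-]{11}", candidate):
        raise ValueError(f"Not a recognizable YouTube video URL: {url}")
    return candidate


def transcribe_to_library(
    url: str,
    fetch_transcript: Callable[[str], str],  # hypothetical hook: video ID -> raw transcript
    summarize: Callable[[str], str],         # hypothetical hook: transcript -> Claude summary
    library: Path,
) -> Path:
    """Fetch, summarize, and save one video as a Markdown note; returns the note's path."""
    video_id = extract_video_id(url)
    transcript = fetch_transcript(video_id)
    summary = summarize(transcript)
    note = (
        f"# {video_id}\n\n"
        f"Source: {url}\n\n"
        "## Summary\n\n"
        f"{summary.strip()}\n\n"
        "## Transcript\n\n"
        f"{transcript.strip()}\n"
    )
    library.mkdir(parents=True, exist_ok=True)
    path = library / f"{video_id}.md"
    path.write_text(note, encoding="utf-8")
    return path
```

Keeping the network calls behind two plain callables makes the pipeline easy to test with stubs, and it lets you swap the summary prompt or model without touching the file-writing logic.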

And that’s it.

With this one, I didn’t spend any tokens on design.

The x-script (transcript plus summary) is the part that’s actually useful. Raw transcripts are messy to skim, and pure summaries cut too much out. Having both side by side means I read the summary first, and if something clicks, I jump straight into the raw transcript at the right timestamp.
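
Jumping to the right timestamp works best if the transcript keeps its timing. As a sketch, assuming the transcript arrives as segments with a start offset in seconds (the `Segment` type and `render_transcript` helper are hypothetical, not taken from the actual tool), each line can carry a deep link built with YouTube's `t` query parameter:

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # assumed: offset from the start of the video, in seconds
    text: str


def format_timestamp(seconds: float) -> str:
    """Render seconds as M:SS, or H:MM:SS once the video passes an hour."""
    total = int(seconds)
    h, rem = divmod(total, 3600)
    m, s = divmod(rem, 60)
    return f"{h}:{m:02d}:{s:02d}" if h else f"{m}:{s:02d}"


def render_transcript(video_id: str, segments: list[Segment]) -> str:
    """One Markdown line per segment, each prefixed with a clickable timestamp."""
    lines = []
    for seg in segments:
        stamp = format_timestamp(seg.start)
        link = f"https://www.youtube.com/watch?v={video_id}&t={int(seg.start)}s"
        lines.append(f"[{stamp}]({link}) {seg.text.strip()}")
    return "\n".join(lines)
```

Clicking a timestamp in the rendered note then opens the video at that point instead of at the beginning.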

Running it locally also means I didn’t bother hooking it up to my server. It lives right next to my other content and research tools, inside one clean workflow.

What I’m still figuring out is the library side. Right now it just grows, so the next iteration will probably add some kind of grouping by topic tags, plus a search layer across the scripts, so I can pull up “everything I’ve transcribed about Claude Code” in one click, for instance.
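
One possible shape for that grouping and search layer, sketched in Python under assumptions: each note carries a `tags:` line (a hypothetical convention, not something the current tool writes), and search is a plain case-insensitive substring match over every Markdown file in the library folder. `read_tags`, `build_tag_index`, and `search` are illustrative names only.

```python
from collections import defaultdict
from pathlib import Path


def read_tags(text: str) -> set[str]:
    """Read tags from a line like 'tags: claude-code, agents' (hypothetical convention)."""
    for line in text.splitlines():
        if line.lower().startswith("tags:"):
            return {t.strip().lower() for t in line[5:].split(",") if t.strip()}
    return set()


def build_tag_index(library: Path) -> dict[str, list[Path]]:
    """Map each lowercase tag to the notes that carry it, in filename order."""
    index: dict[str, list[Path]] = defaultdict(list)
    for path in sorted(library.glob("*.md")):
        for tag in read_tags(path.read_text(encoding="utf-8")):
            index[tag].append(path)
    return dict(index)


def search(library: Path, query: str) -> list[Path]:
    """Case-insensitive substring search across every note in the library."""
    q = query.lower()
    return [
        p for p in sorted(library.glob("*.md"))
        if q in p.read_text(encoding="utf-8").lower()
    ]
```

A plain scan like this is fine for a personal library of a few hundred notes; a much larger library would probably want a proper full-text index instead.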

How it’s built:

This one was a fun, quick little weekend build. I used my now fairly refined AI agents flow process, which I wrote about recently and have also been using to build live sites.

See the new brainylabs product page, game library, and framework landing page. All of them were built with the Claude Code AI agents process I wrote about here.

To access and use the agents, see the listing on GitHub.